Dominic Cronin's weblog

Websphere and Xalan fun for SDL Web 8

Of the small number of people who follow this blog, an unreasonably large proportion will be familiar with SDL Web 8, and the promise it holds for freedom from classpath hell. The new service-based architecture is a huge step forward, but we aren't out of the woods yet. I'm currently busy with an upgrade project where we're taking an interesting mix of web applications from SDL Tridion 2011 to SDL Web 8.

Web 8's much-vaunted REST-ful microservice approach was initially communicated as pretty much a drop-in replacement for the existing Content Delivery APIs. In practice, it turned out that the focus on backwards compatibility wasn't as clear as it might have been, and if you use JSPPage when invoking dynamic component presentations from a JSP page, you are out of luck, because this class doesn't have an implementation in the REST-ful facade. This is annoying, as I can't see any reason why it couldn't or shouldn't be made to work. The missing support is a "known issue", however I'm told there's not much appetite for fixing it. After all, goes the argument, we can use the in-process API, which does have JSPPage, so that's a workaround isn't it? Except that then we don't get the benefit of the dependency-free service architecture, and that, as I shall explain, is no small thing.

With the in-process API, of course, the idea is that all the necessary jars to do Tridion content delivery things have to be on your classpath. The general idea is simple enough, but in practice, we have to deal with the fact that there are several classloaders arranged in a hierarchy, and each of these has its own classpath, although it's not always called that. At the top you've got the classloaders that belong to Java itself. This means the boot classloader that loads the nuts and bolts of Java itself, and also the one that works from the Java CLASSPATH variable plus one for Java extensions. And then lower down you have Websphere's own extensions classloader, some magic called the OSGi class loader gateway, and then the application's classloader and one for the module. Yes I know - it sounds pretty insane, but I didn't make it up. Have a look over here if you don't believe me!

So what kind of trouble did we get into, and how did we get out of it? Well we had all the Web 8 jars in a directory, and we'd deployed our application and set things up so the jars would be on the classpath. Keeping the jars outside the application has been the customer's preferred way of doing things for some years, and it's worked well, so our initial expectation was that things should "just work", but once we started testing, we started to see exceptions like:

java.lang.ClassCastException: org.apache.xml.dtm.ref.DTMManagerDefault incompatible with org.apache.xml.dtm.DTMManager

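This is a bit of a weird one, because if you look up the sources, org.apache.xml.dtm.ref.DTMManagerDefault and org.apache.xml.dtm.DTMManager are actually defined in the same jar. How could they possibly be incompatible? Well as it turns out, it's possible for Java to load two incompatible versions of the same class simultaneously, from different jars. The JVM identifies a class by its name together with the classloader that defined it, so a DTMManagerDefault that came from one copy of the jar can't be cast to a DTMManager that came from another.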

If you look it up, this problem is about the Xerces library, and its associated serialiser jar. I think this comes about because Websphere uses Xerces itself (as do several other application servers), and because Tridion's own Content Delivery installation has these as third-party jars, any difference in the required versions will be problematic. Of course, it could happen with other libraries, but in practice, it's Xerces. (OK - so we also had similar issues with another application that uses JSTL.)
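When chasing this kind of conflict, it can help to find out which jars on disk actually contain the class named in the exception. The following is only a sketch under stated assumptions, not part of the original investigation: `Find-ClassInJar` is a hypothetical helper name, and it relies on nothing more than the fact that a jar is a zip archive in which a class lives at a path derived from its package name.

```powershell
# Jars are zip archives, so the .NET zip classes can read them
Add-Type -AssemblyName System.IO.Compression.FileSystem

# Hypothetical helper: lists every jar under $Root that contains $ClassName
function Find-ClassInJar {
    param(
        [string]$Root,        # directory to search recursively for *.jar
        [string]$ClassName    # fully qualified name, e.g. org.apache.xml.dtm.DTMManager
    )
    # org.apache.xml.dtm.DTMManager -> org/apache/xml/dtm/DTMManager.class
    $entryName = ($ClassName -replace '\.', '/') + '.class'
    Get-ChildItem -Path $Root -Recurse -Filter *.jar | ForEach-Object {
        $jar = $_
        $zip = [System.IO.Compression.ZipFile]::OpenRead($jar.FullName)
        try {
            # Emit the jar's path if any entry matches the class file name
            if ($zip.Entries | Where-Object { $_.FullName -eq $entryName }) {
                $jar.FullName
            }
        }
        finally {
            $zip.Dispose()
        }
    }
}
```

Pointing it at the directory that holds the Tridion jars, and again at the application server's own library directories, with `-ClassName org.apache.xml.dtm.DTMManager` should show whether more than one jar supplies the class. More than one hit is a candidate for the conflict rather than proof of it.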

But let's start with "parent first" and "parent last". When working with the hierarchy of classloaders, the default method of loading a class is parent first. What this means is that when the module classloader needs to load a class, it first checks to see if its parent (the application classloader) can load the class. The application classloader then asks its parent, and so on all the way up. I've visualised this in the left hand diagram with the arrows going down, because in practice, what this means is that classes are made available from the top down. If the java classloaders have the class, that's what will be used throughout.

Parent last is the opposite arrangement. If the module classloader can find the class in its classpath, it loads it itself and doesn't trouble the parents with it. This effectively means that the lower the classloader, the higher priority its jars have, and hence the direction of the arrows in my right-hand diagram.
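The difference between the two delegation orders can be shown with a toy model. This is a simulation only, written in PowerShell to match the rest of the scripts on this blog: no real JVM or Websphere API is involved, the function names `New-ToyLoader` and `Resolve-ToyClass` are made up for the illustration, and the three-loader hierarchy is a simplification of the real one.

```powershell
# A toy model only: each "loader" knows its name, the classes its own
# classpath can supply, and its parent. No real JVM is involved.
function New-ToyLoader {
    param([string]$Name, [string[]]$Classes, $Parent)
    [pscustomobject]@{ Name = $Name; Classes = $Classes; Parent = $Parent }
}

# Returns the name of the loader that ends up supplying the class, or $null
function Resolve-ToyClass {
    param($Loader, [string]$ClassName, [switch]$ParentLast)

    # Parent last: look in our own classpath before asking anyone else
    if ($ParentLast -and $Loader.Classes -contains $ClassName) {
        return $Loader.Name
    }
    # Ask the parent, which in this model always delegates parent first
    if ($Loader.Parent) {
        $found = Resolve-ToyClass -Loader $Loader.Parent -ClassName $ClassName
        if ($found) { return $found }
    }
    # Parent first only reaches this point when nothing higher up has the class
    if ($Loader.Classes -contains $ClassName) { return $Loader.Name }
    return $null
}

$ext    = New-ToyLoader -Name 'WebSphere extensions' -Classes 'org.apache.xml.dtm.DTMManager'
$app    = New-ToyLoader -Name 'application' -Classes @() -Parent $ext
$module = New-ToyLoader -Name 'module' -Classes 'org.apache.xml.dtm.DTMManager' -Parent $app

# Parent first: the copy higher up the hierarchy wins
Resolve-ToyClass $module 'org.apache.xml.dtm.DTMManager'
# Parent last: the module's own copy wins
Resolve-ToyClass $module 'org.apache.xml.dtm.DTMManager' -ParentLast
```

In the model, the same class name resolves to the extensions loader in the first call and to the module loader in the second, which is the whole point of flipping the setting.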

So to get rid of the ClassCastException, we flipped the configuration from Parent First to Parent Last. This works. It's what SDL recommend that you should do if you encounter these exceptions in your environment. But...

Well it turned out that our problems weren't over. Instead of a ClassCastException, now we had a ClassNotFoundException. I can't post it here, because all this happened a while ago and I'm writing this up later, but as I said earlier, it's all about Xerces. The problem in this case is that if a class is loaded by a classloader, it can't call a class that's loaded by a classloader that's lower in the hierarchy. Parent last leaves you rather more sensitive to this kind of problem, because you're deliberately loading classes lower down that might also be available further up the tree, and also might be expected by classes further up. In any case, even though the class is available, it can't be loaded, and you get a ClassNotFoundException.

In our case, we were able to solve the problem by moving the xalan and serialiser jars to Websphere's ws.ext directory, where they would be loaded by the Websphere Extensions classloader.

All this is a bit like the "dll hell" that we used to lose days to on Windows systems before the .NET framework came along. Sooner or later, the answer always ended up being that you needed to know far more than you wanted to about the nuts and bolts of how it all worked, and the various possible locations that Windows would look for a dll. "Classloader hell" is not much different. I've been able to avoid it for a long time just by breezily saying - "Oh yes - that's a Java problem". These days, I seem to be more engaged with Java than I used to be, so having to figure out classloader hell is probably fair game.

It's been a while since I linked to Joel Spolsky's classic: "The law of leaky abstractions", but this seems like a reasonable moment to do so. Joel's piece probably makes for far more entertaining reading than either this, or this, (both of which are pretty good) or any of the other detailed coverage that Google will turn up for you on the complexities of classloaders. My own description here has been deliberately only a sketch to give the big picture. I've skated over many details, missed others out entirely, and probably got a few things wrong (in which case, comments are welcome). My point is that in any given environment, there's a good chance you'll have to solve this kind of thing. It's frustrating, and it costs time that you probably feel like you don't have, but once you engage with the detail, you will find a solution. I'm not saying the solution outlined here is the best one. There may be other ways to get it working, and some of them may well be better.

To finish on a rather more upbeat note, we should all be happy to be moving slowly but surely towards the new architecture. Having to deal with these issues is actually a very welcome reminder of why we're investing in new architectures in the first place. The difficulty lies in the fact that you can't necessarily have a rebuild of all your legacy systems in the scope of an upgrade project, so we live with some things that aren't perfect, but we are moving in the right direction. Next time will be better!

Revisiting validateXml

Some time back in 2009 I blogged about validating Tridion's content delivery configuration files. It was a good idea then, and it's remained a good idea ever since. These days, we're dealing with SDL Web 8 and with the new micro-services architecture, you've got a lot of configuration files to get right. (On my fairly unambitious test system, running staging and live together, I just counted almost 80 configuration files.) Fortunately these seem to be reliably supported with schema files that are simply in each of the microservice folders that you copy during an installation.

Back when I first wrote the ValidateXmlFile powershell function, I'd left it rather unfinished. It was good enough to let me do some validations and detect problems, but it had a significant flaw, in that if a schema file was not present at the location indicated by the noNamespaceSchemaLocation attribute, it would simply not bother with validation. Considering that we're using an XmlReader to do the validation, this is a pretty reasonable design decision - after all the main purpose is to read in the XML, and validation is perhaps a bit of a side-effect. Fair enough, but it's a nasty hole in our defences, so now that I'm revisiting the technique, I've beefed up the script a bit so that it checks that the location is present and that there's a file in the location.

I've also made sure that the script does some pushd/popd to make sure that everything is nicely lined up when the location is relative to the file (which it generally is).

Here's the updated script:

function ValidateXmlFile {
    param ([string]$xmlFile = $(read-host "Please specify the path to the Xml file"))
    $xmlFile = resolve-path $xmlFile
    "==============================================================="
    "Validating $xmlFile using the schemas locations specified in it"
    "==============================================================="
    # The validating reader silently fails to catch any problems if the schema locations aren't set up properly
    # So attempt to get to the right place....
    pushd (Split-Path $xmlFile)
    try {
        $ns = @{xsi='http://www.w3.org/2001/XMLSchema-instance'}
        # of course, if it's not well formed, it will barf here. Then we've also found a problem
        # use * in the XPath because not all files begin with Configuration any more. We'll still
        # assume the location is on the root element
        $locationAttr = Select-Xml -Path $xmlFile -Namespace $ns -XPath '*/@xsi:noNamespaceSchemaLocation'
        if ($locationAttr -eq $null) {throw "Can't find schema location attribute. This ain't gonna work"}
        # Select-Xml returns a SelectXmlInfo whose Path is the file that was searched,
        # so the schema location itself has to be read from the attribute node
        $schemaLocation = resolve-path $locationAttr.Node.Value -ErrorAction SilentlyContinue
        if ($schemaLocation -eq $null)
        {
            throw "Can't find schema at location specified in Xml file. Bailing"
        }
        $settings = new-object System.Xml.XmlReaderSettings
        $settings.ValidationType = [System.Xml.ValidationType]::Schema
        $settings.ValidationFlags = $settings.ValidationFlags `
            -bor [System.Xml.Schema.XmlSchemaValidationFlags]::ProcessSchemaLocation
        $handler = [System.Xml.Schema.ValidationEventHandler] {
            # entering new block so copy $_ (and avoid overwriting the automatic $args)
            $validationArgs = $_
            switch ($validationArgs.Severity) {
                Error {
                    # Exception is an XmlSchemaException
                    Write-Host "ERROR: line $($validationArgs.Exception.LineNumber)" -nonewline
                    Write-Host " position $($validationArgs.Exception.LinePosition)"
                    Write-Host $validationArgs.Message
                    break
                }
                Warning {
                    # So far, everything that has caused the handler to fire, has caused an Error...
                    # So this /might/ be unreachable
                    Write-Host "Warning: $($validationArgs.Message)"
                    break
                }
            }
        }
        $settings.add_ValidationEventHandler($handler)
        $reader = [System.Xml.XmlReader]::Create($xmlFile, $settings)
        while($reader.Read()){}
        $reader.Close()
    }
    catch {
        throw
    }
    finally {
        popd
    }
}

Of course, what you really want is to be able to verify all your configurations in one go. Once the script is in your powershell $profile, you can put together some fairly simple command-line-fu to take care of that. I have all my microservices in one directory, which I guess is a pretty common pattern, so all I had to do was CD over there and execute the following:

gci -r -file -include *conf.xml | % {ValidateXmlFile $_.FullName}

By running this, I've also picked up a couple of things that might be false positives. That aside, this is a real time saver if you're trying to solve issues. There's nothing like being able to eliminate a lot of the stupid typos from consideration all in one go.
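Since a missing schema file is exactly the case the validating reader glosses over, it can also be handy to get a quick overview of where each configuration file points before validating anything. The following is a minimal sketch along those lines. `Get-SchemaLocationStatus` is a hypothetical name, and the function assumes, as the ValidateXmlFile script does, that the attribute sits on the root element and that its value is a path relative to the configuration file.

```powershell
# Hypothetical helper: reports, per *conf.xml file, the schema location it names
# and whether a file actually exists there
function Get-SchemaLocationStatus {
    param([string]$Root = '.')

    $ns = @{ xsi = 'http://www.w3.org/2001/XMLSchema-instance' }
    Get-ChildItem -Path $Root -Recurse -File -Include *conf.xml | ForEach-Object {
        $file = $_
        # Same XPath as ValidateXmlFile: the attribute is assumed to be on the root element
        $attr = Select-Xml -Path $file.FullName -Namespace $ns -XPath '*/@xsi:noNamespaceSchemaLocation'
        $location = if ($attr) { $attr.Node.Value } else { $null }
        # Assumes the location is a path relative to the configuration file
        $exists = [bool]($location -and (Test-Path (Join-Path $file.DirectoryName $location)))
        [pscustomobject]@{
            File           = $file.FullName
            SchemaLocation = $location
            SchemaExists   = $exists
        }
    }
}
```

Piping the result through `Where-Object { -not $_.SchemaExists }` lists only the files whose schema can't be found, which are the ones where a validation run would otherwise stay silent.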

Upgrading SDL Web Microservices - don't copy new over old

I've just broken my Tridion system. I had a perfectly good SDL Web 8.1.1 installation, and I've broken it upgrading to 8.5. This is really annoying. I'm gritting my teeth as I type this, and trying not to actively froth at the mouth. It's annoying for two reasons:

- The documentation told me to

- I got burned exactly the same way going from 8.1 to 8.1.1 and I don't seem to have learned my lesson.

So what exactly am I ranting about? Let me explain.

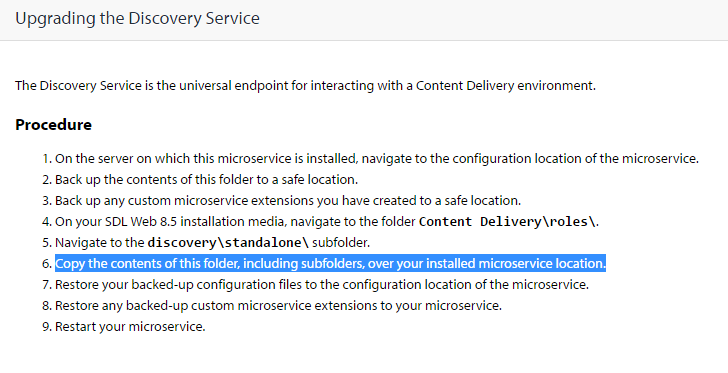

Take the discovery service as an example, but the same thing applies to the other services. Look at the documentation for Upgrading the Discovery Service. Check out the highlighted line below:

Doing this goes against the grain for anyone with experience of setting up servers. Copying a clean "known good" situation over a possibly dirty implementation and expecting it to work is asking for trouble. I'd never have written these instructions myself. What on earth was I thinking when I blindly followed them?

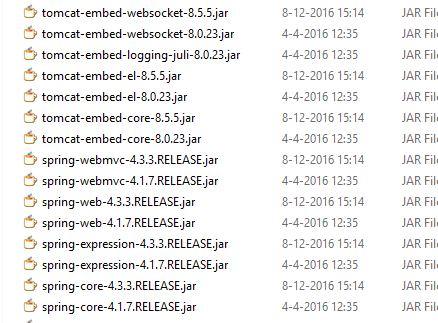

The service directory that you're attempting to overwrite contains a lib directory full of jars, and a services directory containing yet two more directories of jars. What you want to do is replace the jars with their new versions. This would be fine if all the jars had the same name as before, and there weren't any that shouldn't be there any more. As it is, the file names include their version numbers, so you end up with both versions of everything, like this:

This results in messages like "Class path contains multiple SLF4J bindings" and ensures that your services don't start. The solution is simple enough. Go to the various directories, and make sure that they contain only the jars from the 8.5 release.
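Spotting the leftovers by eye gets tedious across several services, so here is a rough sketch of a helper that lists jars present in more than one version within the same directory. `Get-DuplicateJar` is a hypothetical name, and the version-stripping pattern is an assumption: it expects the common artifact-version.jar naming and will miss anything that departs from it.

```powershell
# Hypothetical helper: finds jars that appear in more than one version per directory
function Get-DuplicateJar {
    param([string]$Path = '.')

    # Assumes <artifact>-<version>.jar naming: strip from the first hyphen that is
    # followed by a digit, so slf4j-api-1.7.12 becomes slf4j-api
    $versionPattern = '-\d[\w.\-]*$'

    Get-ChildItem -Path $Path -Recurse -Filter *.jar |
        Group-Object -Property { $_.DirectoryName + '|' + ($_.BaseName -replace $versionPattern, '') } |
        Where-Object { $_.Count -gt 1 } |
        ForEach-Object {
            [pscustomobject]@{
                Directory = $_.Group[0].DirectoryName
                Artifact  = ($_.Group[0].BaseName -replace $versionPattern, '')
                Files     = @($_.Group | Sort-Object Name | ForEach-Object { $_.Name })
            }
        }
}
```

Run against a service directory, each row names an artifact and the competing files. The function only reports; deciding which file belongs to the 8.5 release, and deleting the other, is deliberately left as a manual step.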

Fortunately, I'm still feeling very positive about the folks at SDL in the wake of having received the MVP award again. I suppose I'll forgive them.... once I finish cleaning up the rest of my services.

Update: After posting this fairly late last night, it's now not even lunch time the following day, and I've already been informed that SDL have seen this, and are already taking action to update the documentation. That's pretty good going. Thanks

Checking your DXA/DD4T JSON in the SDL Web broker database

Over at the Indivirtual blog, I've posted about a diagnostic technique for use with the SDL Web broker database.

https://blog.indivirtual.nl/checking-dxadd4t-json-sdl-web-broker-database/

Enjoy!

Testing the SDL Web 8 micro-services

Over at blog.indivirtual.nl I've just blogged about testing the SDL Web 8 microservices.

Finding your way around the SDL Web 8 cmdlets

In SDL Web 8, there are far more things managed via Windows PowerShell than there used to be in previous releases of the product. On the one hand, this makes a lot of sense, as the PowerShell offers a clean and standardised way to interact with various settings and configurations. Still, not everyone is familiar enough with the PowerShell to immediately get the most out of the cmdlets provided by the SDL modules. In fact, today, someone told me quite excitedly that they'd discovered the Get-TtmMapping cmdlet. My first question was "Have you run Get-Command on the SDL modules?"

The point is that with the PowerShell, quite a lot of attention is paid to discoverability. Naming conventions are specified so that you have a good chance of being able to effectively guess the name of the command you need, and other tools are provided to help you list what is available. The starting point is Get-Module. To list the modules available to you, you invoke it like this:

get-module -listavailable

This will list a lot of standard Windows modules, but on your SDL Web 8 Content Manager server, you should see the following at the bottom of the listing:

    Directory: C:\Program Files (x86)\SDL Web\bin\PowerShellModules

ModuleType Version    Name                                ExportedCommands
---------- -------    ----                                ----------------
Binary     0.0.0.0    Tridion.ContentManager.Automation   {Clear-TcmPublicationTarget, Get-TcmApplicationIds, Get-Tc...
Binary     0.0.0.0    Tridion.TopologyManager.Automation  {Add-TtmSiteTypeKey, Add-TtmCdEnvironment, Add-TtmCdTopolo...

This gives you the names of the available SDL modules. From here, you can dig in further to list the commands in each module, like this:

get-command -module Tridion.TopologyManager.Automation

This gives you the following output:

CommandType Name                      ModuleName
----------- ----                      ----------
Cmdlet      Add-TtmCdEnvironment      Tridion.TopologyManager.Automation
Cmdlet      Add-TtmCdTopology         Tridion.TopologyManager.Automation
Cmdlet      Add-TtmCdTopologyType     Tridion.TopologyManager.Automation
Cmdlet      Add-TtmCmEnvironment      Tridion.TopologyManager.Automation
Cmdlet      Add-TtmMapping            Tridion.TopologyManager.Automation
Cmdlet      Add-TtmSiteTypeKey        Tridion.TopologyManager.Automation
Cmdlet      Add-TtmWebApplication     Tridion.TopologyManager.Automation
Cmdlet      Add-TtmWebsite            Tridion.TopologyManager.Automation
Cmdlet      Clear-TtmCdEnvironment    Tridion.TopologyManager.Automation
Cmdlet      Clear-TtmMapping          Tridion.TopologyManager.Automation
Cmdlet      Disable-TtmCdEnvironment  Tridion.TopologyManager.Automation
Cmdlet      Enable-TtmCdEnvironment   Tridion.TopologyManager.Automation
Cmdlet      Export-TtmCdStructure     Tridion.TopologyManager.Automation
Cmdlet      Get-TtmCdEnvironment      Tridion.TopologyManager.Automation
Cmdlet      Get-TtmCdTopology         Tridion.TopologyManager.Automation
Cmdlet      Get-TtmCdTopologyType     Tridion.TopologyManager.Automation
Cmdlet      Get-TtmCmEnvironment      Tridion.TopologyManager.Automation
Cmdlet      Get-TtmMapping            Tridion.TopologyManager.Automation
Cmdlet      Get-TtmWebApplication     Tridion.TopologyManager.Automation
Cmdlet      Get-TtmWebsite            Tridion.TopologyManager.Automation
Cmdlet      Import-TtmCdStructure     Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmCdEnvironment   Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmCdTopology      Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmCdTopologyType  Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmCmEnvironment   Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmMapping         Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmSiteTypeKey     Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmWebApplication  Tridion.TopologyManager.Automation
Cmdlet      Remove-TtmWebsite         Tridion.TopologyManager.Automation
Cmdlet      Set-TtmCdEnvironment      Tridion.TopologyManager.Automation
Cmdlet      Set-TtmCdTopology         Tridion.TopologyManager.Automation
Cmdlet      Set-TtmCdTopologyType     Tridion.TopologyManager.Automation
Cmdlet      Set-TtmCmEnvironment      Tridion.TopologyManager.Automation
Cmdlet      Set-TtmMapping            Tridion.TopologyManager.Automation
Cmdlet      Set-TtmWebApplication     Tridion.TopologyManager.Automation
Cmdlet      Set-TtmWebsite            Tridion.TopologyManager.Automation
Cmdlet      Sync-TtmCdEnvironment     Tridion.TopologyManager.Automation

I'm sure you can see immediately that this gives you a great overview of the possibilities - probably including some things you hadn't thought of. You can also see how they follow the standard naming conventions. But now that you know what commands are available, how do you use them? What parameters do they accept? What are they for?

It might sound obvious, but indeed, the modules come with batteries included, including built-in help. So, for example, to learn more about a command, you can simply do this:

help Get-TtmMapping

or if your Unix roots are showing, this does the same thing:

man Get-TtmMapping

The output looks like this:

NAME
    Get-TtmMapping

SYNOPSIS
    Gets one or all Mappings from the Topology Manager.

SYNTAX
    Get-TtmMapping [[-Id] <String>] [-TtmServiceUrl <String>] [<CommonParameters>]

DESCRIPTION
    The Get-TtmMapping cmdlet retrieves a Mapping with the specified Id.
    If Id parameter is not specified, list of all Mappings will be returned.

RELATED LINKS
    Add-TtmMapping
    Set-TtmMapping
    Remove-TtmMapping

REMARKS
    To see the examples, type: "get-help Get-TtmMapping -examples".
    For more information, type: "get-help Get-TtmMapping -detailed".
    For technical information, type: "get-help Get-TtmMapping -full".
    For online help, type: "get-help Get-TtmMapping -online"

By using these few simple tools, you can accelerate your learning process and find the relevant commands easily and quickly. Happy hunting!
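Because the cmdlets follow the Verb-Noun convention, the flat listing from Get-Command can also be folded into one row per noun, which makes it easier to see what you can do with each kind of object. A minimal sketch, in which `Get-VerbsByNoun` is a made-up helper name and only standard Get-Command, Group-Object and Sort-Object behaviour is relied on:

```powershell
# Hypothetical helper: one row per noun, listing the verbs a module offers for it
function Get-VerbsByNoun {
    param([Parameter(Mandatory)][string]$Module)

    Get-Command -Module $Module -CommandType Cmdlet |
        Group-Object -Property Noun |
        Sort-Object -Property Name |
        ForEach-Object {
            [pscustomobject]@{
                Noun  = $_.Name
                Verbs = ($_.Group.Verb | Sort-Object) -join ', '
            }
        }
}
```

Called as `Get-VerbsByNoun Tridion.TopologyManager.Automation`, and going by the listing earlier in this post, the TtmMapping row should read Add, Clear, Get, Remove, Set. If you only care about one noun, `Get-Command -Module Tridion.TopologyManager.Automation -Noun TtmMapping` narrows the standard listing in the same way.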

Getting started with SDL Web 8 and the discovery service

Well it's taken me a while to get this far, but I'm finally getting a bit further through the process of installing Web 8. My first attempt had foundered when I failed to accept the installer's defaults - it really, really wants to run the various services on different ports instead of by configuring host headers!

Anyway - this time I accepted the defaults and the content manager install seemed to go OK. (I suppose I'll set up the host header configuration manually at some point once I'm a bit more familiar with how everything hangs together.) So now I'm busy installing and configuring content delivery, and specifically the Discovery service. I got as far as this point in the documentation, where it tells you to run

java -jar discovery-registration.jar update

This didn't work. Instead I got an error message hinting that perhaps the service ought to be running first. So after a minute or two checking whether I'd missed a step in the documentation, I went to tridion.stackexchange.com and read a couple of answers. Peter Kjaer had advised someone to run start.ps1, so I went back to have a better look. Sure enough, in the Discovery service directory, there's a readme file, with instructions for starting the service from the shell, and also for running it as a service. (This also explains why I couldn't find the Windows service mentioned in the following step in the installation documentation.)

Anyway - so I tried to run the script, and discovered that it expects to find JAVA_HOME in my environment. So I added the environment variable, but then when I started the script it spewed out a huge long Java exception saying it couldn't find the database I'd configured. But... nil desperandum, community to the rescue, and it turned out to be a simple fix.

So with that out of the way, I ran the other script - to install it as a service, and I now have a working discovery service... next step: registration

New Tridion cookbook article: Recursive walk of Tridion tree

I'm still trying to get the important parts of my Tridion developer summit talk online. With a code-based demo like that, sharing the slides is pretty pointless, so I'm putting the code on-line wherever it makes sense. So far this has been in the Tridion cookbook. Here's the latest:

https://github.com/TridionPractice/tridion-practice/wiki/Recursive-walk-of-Tridion-tree

The thing that really triggered me to get this on-line was that someone had recently asked me if it was possible to query Tridion to find items that were local to a publication rather than shared from higher in the BluePrint. With the tree walk in place, this becomes almost trivial. (I'm not saying that there aren't better ways to get the list of items to process, but the tree walk certainly works.)

So having got the items into a variable following the technique in the recipe, finding the shared items becomes as simple as:

$items | ? {$_.BluePrintInfo.IsShared}

But it might be more productive to throw all the items into a spreadsheet along with the relevant parts of their BluePrint Info:

$items | select Title, Id, @{n="IsShared";e={$_.BluePrintInfo.IsShared}}, `
    @{n="IsLocalized";e={$_.BluePrintInfo.IsLocalized}} `
    | Export-csv blueprintInfo.csv
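The question that prompted all this was about items that are local to a publication, which is the complement of the shared case. A small sketch of that filter follows. `Select-LocalItem` is a hypothetical name, and the sketch rests on an assumption about how to read the flags: an item counts as local when it is neither shared from a parent nor a localized copy of a parent's item. The sample objects are stand-ins built by hand, not real core service data.

```powershell
# Hypothetical filter: passes on only items that are neither shared nor localized
filter Select-LocalItem {
    if ((-not $_.BluePrintInfo.IsShared) -and (-not $_.BluePrintInfo.IsLocalized)) { $_ }
}

# Hand-made stand-ins for the items collected by the tree walk
$sampleItems = @(
    [pscustomobject]@{ Title = 'Shared page';    BluePrintInfo = [pscustomobject]@{ IsShared = $true;  IsLocalized = $false } }
    [pscustomobject]@{ Title = 'Localized page'; BluePrintInfo = [pscustomobject]@{ IsShared = $false; IsLocalized = $true } }
    [pscustomobject]@{ Title = 'Local page';     BluePrintInfo = [pscustomobject]@{ IsShared = $false; IsLocalized = $false } }
)

# Only 'Local page' should come through
$sampleItems | Select-LocalItem | Select-Object -ExpandProperty Title
```

With the real data, `$items | Select-LocalItem` would take the place of the last line.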

Am I the only one that finds this fun? It's fun, right! :-)

New Tridion Cookbook article: Set up publication targets

In my "Talking to Tridion" session at the Tridion Developer Summit this year, one of the things I demonstrated was a script to automatically set up publication targets in Tridion. I'm now finally getting round to putting the talk materials on-line, and this one seemed a good candidate to become a recipe in the Tridion Cookbook. So if you are feeling curious, get yourself over to Tridion Practice and have a look. The new recipe is to be found here.

Moving your Tridion databases

As part of setting up my new laptop, I installed MSSQL and obviously I wanted to have my existing Tridion databases available. My Tridion image had previously not had a database - I had that running natively on the old laptop, but I'd decided to go with a more conventional approach and run it in the image with Tridion. This transition had a couple of interesting moments, and hence this post.

Moving the databases and getting MSSQL security working again.

The moving part was fairly simple. I just detached all the databases, and copied the pairs of .MDF and .LDF files over to the new location and attached them.

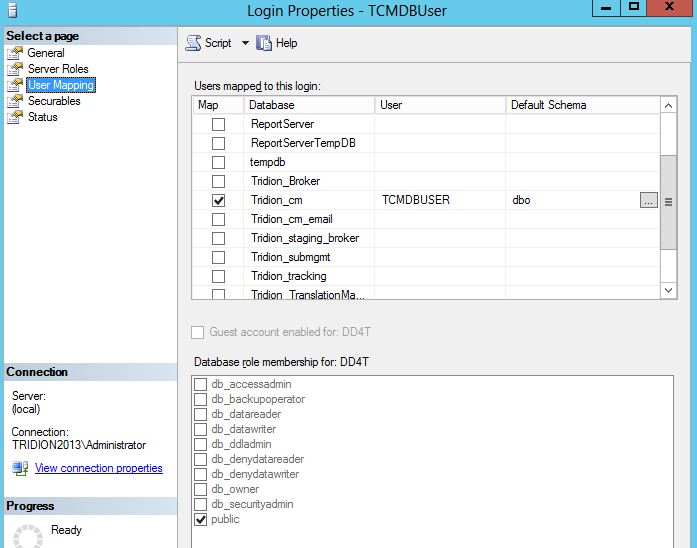

Once you've done this, you'll find that in each database, if you look under Security/Users, you'll find a User with a name that matches the login that you use in your Tridion configuration... for example: TcmDbUser. Unfortunately, this isn't enough. There are (at least) two kinds of User. The one you can see in your database (this is strictly a "database principal") can't be used for logging in. For that you need a "server principal", and these are to be found in your MSSQL instance under Security/Logins. For everything to work correctly, there needs to be a mapping between the database principal and the server principal. You can see this if you look in a correctly configured system. Right click on the login and open the properties, and open up the user mapping page. It should look something like this:

So what we're aiming for is to have a matching Login and database User, with the same name. Creating a Login is easy enough, but if you try to add the mapping by hand in the User Mapping page, it will fail, because it wants to create a database user, and a database user with the same name already exists. (You could delete it, but then you'd have a world of pain trying to figure out all the properties and settings that the Tridion database scripts take care of automatically. I'm not even sure if support would ever talk to you again if you did this.)

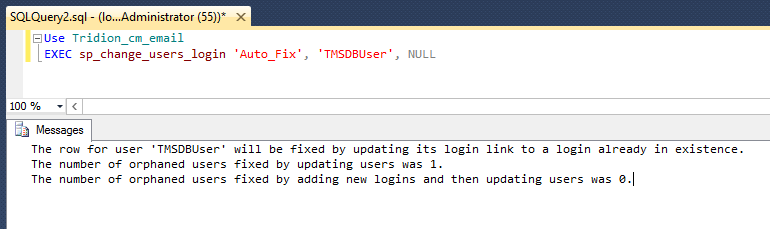

Fortunately, there's a better way. You can do it via SQL with various ALTER USER commands, but then you are going to be deeper into the security features of MSSQL than any normal person ought to wish for. (In this context, DBA's aren't normal, but then they won't be needing to read this blog post, will they?) However, you don't need to figure out all that SQL, because there's a system procedure (sp_change_users_login) that does exactly what you want. As long as your Login and User have the same name, you can just use the Auto_fix method, like this:

Remembering the database settings you'd forgotten about.

So I had all the MSSQL stuff correctly set up, or so I thought, but when I started to try to use the Tridion GUI, I kept getting error notifications in the Message centre.

A network-related or instance-specific error occurred while establishing a connection to SQL Server. The server was not found or was not accessible.

Verify that the instance name is correct and that SQL Server is configured to allow remote connections. (provider SQL: Network interfaces error, 26 - Error locating Server/Instance Specified)

This was pretty odd. I could see most of the GUI working fine, and publications were listed OK, but other lists weren't populated. I speculated that it might only be lists served via service calls that had problems, but when I checked the core service, it was able to list out my entire system. I spent quite some time fiddling with various settings and checking that named pipes etc. were configured correctly, before I eventually got smart enough to check T-REX again. In an old post from 2011, Rick Pannekoek suggested that a similar problem might be caused by the outbound email configuration.

Sure enough - I'd forgotten that outbound email has its own database configuration (if I'd ever known it - the installer sets it all up and mostly you never need to look there, unless you're actually doing outbound email). Anyway - I certainly hadn't realised that this would break the Content Manager's GUI.

A quick visit to:

C:\Program Files (x86)\Tridion\config\OutboundEmail.xml

and then a bit of fiddling with decrypting and re-encrypting (there are scripts for this that come with the installer), and I had my system in fully working order.